By Lisa Nault

Staff Writer

Facebook has come under fire recently for the spread of fake news stories. People have been reading articles they assume to be factual when in reality they are not. However, four students have created an algorithm that distinguishes fake news stories from credible ones and lets users clearly see which articles they can trust.

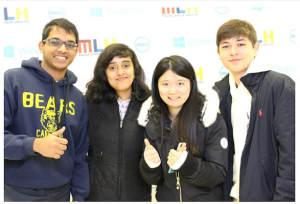

Anant Goel, Nabanita De, Qinglin Chen and Mark Craft are the creators of “FiB: Stop living a lie,” a fake news detector for Facebook. The students built the browser extension in just 36 hours during a hackathon at Princeton University in November.

FiB is a Chrome-based browser extension that uses artificial intelligence (AI) to scan the statuses, links, pictures, and articles in a person’s news feed. De explains that the AI classifies the content it finds and, after checking other online sources, determines what can be verified and what cannot.

She told Business Insider in greater detail that the AI checks a website’s reputation, screens links against malware and phishing-site databases, and then searches for more information using various search engines until it has gathered several reliable sources to support its decision. Once the AI determines whether an article is fake, it places a label in the top right-hand corner of the post to inform the user: “Verified” if the story is factual and “Not Verified” if it is not. When the AI flags a story as false, it also tries to locate a more credible source on the same subject.

FiB can also warn users before they post anything on Facebook. Before the content is published, FiB notifies the user through a chat box explaining that the information they are about to share is not verified. The user can then discard the post and research the topic further, or ignore the warning and post anyway. The AI assesses an unpublished post using the same process it applies to content in the news feed.

Although they attend different schools (Goel is from Purdue University, De studies at the University of Massachusetts Amherst, and both Chen and Craft are from the University of Illinois at Urbana-Champaign), the four students created a significant and useful tool together. Because FiB was built during a hackathon, however, the team understands that they may not have had time to perfect it, which is why they released it as an open-source project. Anyone can access FiB and either install the plug-in to begin verifying Facebook content or explore the project and possibly contribute to it.

Ideally, the students would like to see Facebook integrate FiB into its site so that all users, not just those who took the initiative to install the plug-in, are made aware of unverified information. FiB, along with its latest features, can be downloaded from github.com.